In the digital era, data has become the lifeblood of innovation, fuelling artificial intelligence systems that can diagnose diseases, predict stock trends, and personalise user experiences. Yet, this abundance of information comes with a challenge — privacy. What if you could train a powerful AI model without ever moving data from its original location? That’s the vision behind Federated Learning, a concept that treats data like gold stored in individual vaults — valuable, but never to be moved.

This approach is revolutionising how companies and institutions collaborate, allowing them to build intelligent systems together without sacrificing confidentiality.

The Metaphor: Learning Without Leaving Home

Imagine a group of students working on a shared science project. Instead of gathering in one classroom, each works from their home, performing experiments and recording results. A central teacher collects only the summaries of their findings, not the raw data. The teacher then combines these learnings to improve the overall project outcome.

That’s how federated learning works. A central server plays the role of the teacher, while individual devices or institutions act as students. Each trains a local copy of the model on its own data and shares only the resulting model updates, never the data itself. This lets participants collaborate while keeping their raw data where it is, a balance that traditional centralised learning systems often struggle to achieve.
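To make the "student" step concrete, here is a minimal sketch of what one participant might do. It assumes a toy one-feature linear model trained with plain gradient descent. The function `local_update` and the sample data are hypothetical, chosen only to show that the raw records stay inside the function and only the learned weights are returned.

```python
# Minimal sketch of a federated learning participant ("student").
# Assumption: a one-feature linear model y ~ w*x + b, trained with gradient
# descent on mean squared error. local_update and the data are hypothetical.

def local_update(weights, data, lr=0.1, epochs=500):
    """Train on local (x, y) pairs and return only the updated weights.

    The raw `data` never leaves this function; the server only ever
    receives the returned (w, b) tuple.
    """
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


# Each "home" holds its own measurements, here following y = 2x + 1
home_a = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
home_b = [(0.5, 2.0), (1.5, 4.0)]

global_weights = (0.0, 0.0)  # the model the teacher sends out
update_a = local_update(global_weights, home_a)
update_b = local_update(global_weights, home_b)
print(update_a, update_b)  # both close to (2.0, 1.0); only these are shared
```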

For professionals aiming to explore this blend of innovation and ethics, joining an AI course in Bangalore provides first-hand exposure to how federated learning is transforming data collaboration and security in the modern world.

How Federated Learning Solves the Privacy Puzzle

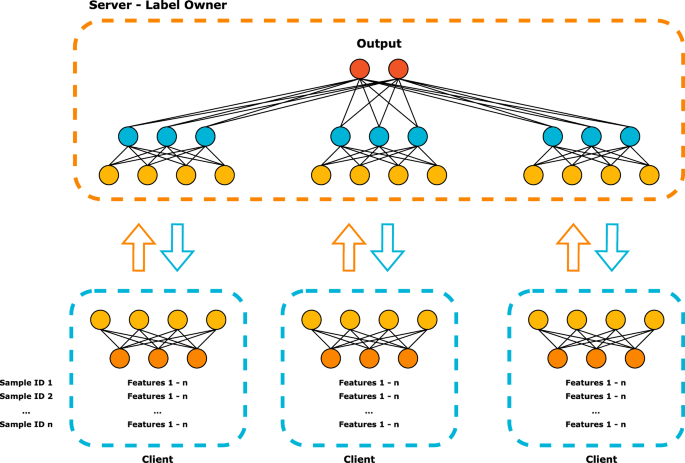

In a typical machine learning setup, all data is aggregated into a central location for training. While efficient, it poses significant privacy and compliance risks — particularly in sensitive domains like healthcare and finance. Federated learning flips this approach by decentralising the process.

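Each participating node (a hospital, bank, or smartphone) trains the model locally and sends only its model updates, often encrypted or securely aggregated, back to the central server. The server combines these updates with an algorithm such as Federated Averaging (FedAvg), which averages the clients' models weighted by how much data each one trained on, and uses the result as the new global model without ever accessing raw data.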
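The server-side aggregation step can be sketched as follows. In Federated Averaging, the new global model is the weighted sum w_new = sum over clients k of (n_k / n) × w_k, where w_k is client k's model, n_k its number of training examples, and n the total across clients. This plain-Python version assumes each model is a flat list of floats; `federated_average` and the hospital figures are hypothetical.

```python
# Minimal sketch of the Federated Averaging aggregation step.
# Assumption: every model is a flat list of floats of the same length.
# federated_average and the example numbers are hypothetical.

def federated_average(client_updates):
    """Combine client models, weighting each by its number of local examples.

    client_updates: list of (weights, num_examples) pairs.
    """
    total = sum(n for _, n in client_updates)
    if total == 0:
        raise ValueError("no training examples reported")
    dim = len(client_updates[0][0])
    new_global = [0.0] * dim
    for weights, n in client_updates:
        if len(weights) != dim:
            raise ValueError("all clients must send models of the same shape")
        for i, w in enumerate(weights):
            # Clients with more data pull the global model harder
            new_global[i] += (n / total) * w
    return new_global


# Three hospitals report updated weights and how many records they used
updates = [
    ([1.0, 2.0], 100),  # hospital A
    ([3.0, 0.0], 300),  # hospital B
    ([2.0, 1.0], 600),  # hospital C
]
print(federated_average(updates))  # approximately [2.2, 0.8]
```

Weighting by example count means a small clinic's model counts for less than a large hospital's, which matches what training on the pooled data would emphasise.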

Think of it as creating a shared intelligence system that learns collectively but keeps its secrets locked away. Because raw data stays on site, this design can make it easier to meet the strict data-handling requirements of regulations like GDPR and HIPAA.

The Power of Cross-Silo Collaboration

When organisations across sectors collaborate, the potential of federated learning becomes extraordinary. For example, multiple hospitals can train a model to detect early signs of disease by sharing model updates rather than patient records. Financial institutions can jointly train fraud detection models without disclosing transaction data.

This cross-silo learning can make models more robust and better at generalising, because they benefit from the diversity of data across institutions while respecting privacy. It’s a win-win situation: better models without compromising ethics.

Professionals taking up an AI course in Bangalore can experiment with federated learning frameworks like TensorFlow Federated or PySyft to see how secure model training happens across distributed environments, preparing them to implement these systems in real-world projects.

Technical Foundations: Keeping the System Secure

At its core, federated learning relies on several privacy-preserving techniques that make this distributed approach viable:

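- Differential Privacy: Adds carefully calibrated random noise to model updates (usually after clipping their size), limiting how much any individual data point can influence, and therefore be inferred from, the shared result.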

- Secure Multiparty Computation (SMPC): Allows participants to compute joint results without revealing their private inputs.

- Homomorphic Encryption: Enables computations, such as summing model updates, directly on encrypted data, so the central server can aggregate updates without ever seeing them in plain form.
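The differential privacy technique in the list above can be sketched as "clip, then add noise". This is a minimal illustration, not a complete privacy mechanism: choosing `noise_multiplier` to reach a specific (epsilon, delta) guarantee requires a privacy accountant, which is omitted here. This version adds noise on the client side; many deployments instead add it once to the aggregate on the server. The function name and parameter values are hypothetical.

```python
import math
import random

# Minimal sketch of differentially private update release: clip the
# update's L2 norm, then add Gaussian noise scaled to the clipping bound.
# privatize_update and its default parameters are hypothetical.

def privatize_update(update, clip_norm=1.0, noise_multiplier=1.1, rng=None):
    """Clip an update to L2 norm <= clip_norm, then add Gaussian noise.

    Clipping bounds how far any single participant can move the model;
    the noise (std = noise_multiplier * clip_norm) masks that bounded
    contribution. No privacy accounting is done here.
    """
    rng = rng or random.Random()
    norm = math.sqrt(sum(v * v for v in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    clipped = [v * scale for v in update]
    sigma = noise_multiplier * clip_norm
    return [v + rng.gauss(0.0, sigma) for v in clipped]


rng = random.Random(42)
noisy = privatize_update([3.0, 4.0], clip_norm=1.0, noise_multiplier=1.1, rng=rng)
print(noisy)  # the clipped update [0.6, 0.8] plus random noise
```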

These methods act as invisible shields, securing the integrity and confidentiality of every participant. While the mathematics behind them is complex, their outcome is simple — safer, more ethical AI systems.
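To illustrate the secure multiparty computation idea, here is a toy version of pairwise masking, one of the building blocks used in secure aggregation schemes. The server learns the sum of all updates but not any individual one. It assumes updates have already been encoded as non-negative integers whose sum stays below the modulus. `mask_updates`, `server_sum`, and `MODULUS` are hypothetical names. Real protocols derive each pair's mask from a key agreement and tolerate clients that drop out mid-round. Here a single seeded generator stands in for the key agreement, and dropouts are ignored.

```python
import random

# Toy sketch of secure aggregation via pairwise additive masks.
# Assumption: updates are non-negative integers (e.g. fixed-point encoded
# weights) and their true sum is below MODULUS. Names are hypothetical.
MODULUS = 2**32


def mask_updates(updates, seed=0):
    """Return masked copies of the clients' integer updates.

    For every pair of clients (i, j) with i < j, a shared random mask is
    added by client i and subtracted by client j, so all masks cancel in
    the sum. A seeded generator stands in for real pairwise key agreement.
    """
    rng = random.Random(seed)
    dim = len(updates[0])
    masked = [list(u) for u in updates]
    for i in range(len(updates)):
        for j in range(i + 1, len(updates)):
            mask = [rng.randrange(MODULUS) for _ in range(dim)]
            for k in range(dim):
                masked[i][k] = (masked[i][k] + mask[k]) % MODULUS
                masked[j][k] = (masked[j][k] - mask[k]) % MODULUS
    return masked


def server_sum(masked):
    """What the server computes: the element-wise sum of masked vectors."""
    dim = len(masked[0])
    return [sum(vec[k] for vec in masked) % MODULUS for k in range(dim)]


updates = [[5, 10], [7, 1], [3, 4]]  # integer-encoded updates, 3 clients
masked = mask_updates(updates)
print(masked)              # each vector on its own looks like random numbers
print(server_sum(masked))  # [15, 15], the true sum of the updates
```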

Challenges in Federated Learning

Despite its promise, federated learning is not without hurdles. Data stored in different silos can vary in quality, format, and distribution. Communication overheads may slow down model updates, and ensuring synchronisation between participants can be difficult.
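Differences in data distribution between silos are often described as non-IID data. One common way to simulate this in experiments is to sort examples by label and give each client a contiguous slice, so that each client sees only a few classes even though the overall dataset is balanced. The sketch assumes integer class labels. `sort_and_shard` is a hypothetical helper, and any examples left over after an uneven split are simply dropped.

```python
from collections import Counter

# Sketch of simulating non-IID (label-skewed) silos for experiments.
# sort_and_shard is a hypothetical helper; leftover examples are dropped.


def sort_and_shard(labels, num_clients):
    """Give each client a contiguous slice of example indices sorted by label."""
    order = sorted(range(len(labels)), key=lambda i: labels[i])
    shard = len(order) // num_clients
    return [order[c * shard:(c + 1) * shard] for c in range(num_clients)]


labels = [0, 1, 2, 3] * 25  # 100 examples, 4 classes, perfectly balanced overall
clients = sort_and_shard(labels, num_clients=4)
for c, idx in enumerate(clients):
    # Each client ends up holding examples of a single class
    print(c, Counter(labels[i] for i in idx))
```

A model trained on any one of these shards would only ever see one class, which is why naively averaged updates from such silos can pull the global model in conflicting directions.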

Moreover, securing the model itself against potential adversarial attacks — where malicious clients may send false updates — remains an area of active research.
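One family of defences studied against such false updates is robust aggregation. The sketch below compares a plain mean with a coordinate-wise median, using hypothetical numbers in which one of five clients sends an extreme update. The median resists a minority of outliers like this one, but it is not a complete defence against every attack.

```python
import statistics

# Sketch comparing plain averaging with coordinate-wise median aggregation.
# The client updates below are hypothetical; the last one is malicious.


def mean_aggregate(updates):
    """Element-wise mean: a single extreme client can drag it arbitrarily far."""
    return [statistics.fmean(col) for col in zip(*updates)]


def median_aggregate(updates):
    """Element-wise median: ignores a minority of extreme values per coordinate."""
    return [statistics.median(col) for col in zip(*updates)]


honest = [[1.0, 2.0], [1.1, 1.9], [0.9, 2.1], [1.0, 2.0]]
malicious = [[100.0, -100.0]]
updates = honest + malicious

print(mean_aggregate(updates))    # roughly [20.8, -18.4], far from honest values
print(median_aggregate(updates))  # [1.0, 2.0]
```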

Yet, these challenges represent opportunities for innovation. As more organisations invest in privacy-preserving AI, advancements in encryption, communication protocols, and distributed computing continue to make federated learning more practical and scalable.

Conclusion: The Future of Privacy-Preserving AI

Federated learning is more than a technical framework — it’s a philosophical shift in how we approach collaboration and privacy. It proves that progress doesn’t have to come at the cost of protection.

In the near future, AI models will increasingly be built across borders and organisations, learning from millions of decentralised data sources without ever compromising trust. For professionals and students alike, mastering these technologies offers a pathway into the most responsible and future-ready form of AI development.

Just as bridges connect distant lands without moving the earth beneath them, federated learning connects data ecosystems without displacing the data itself — a true harmony of intelligence and privacy.